robots.txt emulator: Block URLs Based on robots.txt Rules

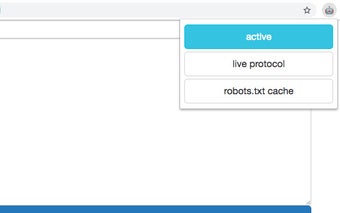

robots.txt emulator is a Chrome extension that blocks URLs according to the rules defined in the robots.txt file on specific hosts. It gives you a convenient way to see which URLs are being blocked and to modify the robots.txt file in real time, and it is compatible with Chrome 41.

With robots.txt emulator, access to a URL is prevented whenever the rules in the robots.txt file disallow it. This is useful for website owners who want to control which pages or files search engine crawlers can access. The extension's interface lets you view and edit the robots.txt file on the fly, so you can adjust the rules to suit your needs.
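To make the idea of rule-based blocking concrete, here is a minimal sketch in Python using the standard library's `urllib.robotparser`. It is not the extension's own code; the sample rules, the `example.com` URLs, and the `is_blocked` helper are assumptions chosen purely for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used only for this illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Disallow: /tmp/
"""


def is_blocked(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """Return True if the given rules disallow `url` for `user_agent`."""
    parser = RobotFileParser()
    # parse() accepts the file content as an iterable of lines.
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in (
        "https://example.com/private/report.html",
        "https://example.com/index.html",
    ):
        status = "blocked" if is_blocked(ROBOTS_TXT, url) else "allowed"
        print(f"{url}: {status}")
```

Editing the `Disallow` lines in `ROBOTS_TXT` and re-running the check loosely mirrors what editing the rules on the fly would look like: the same URL can move between blocked and allowed as the rules change.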

A standout feature of robots.txt emulator is its compatibility with Chrome 41, which means that users on older versions of Chrome can still use the extension. Whether you're a website owner or a developer, robots.txt emulator offers a convenient way to manage URL access based on robots.txt rules.